
Different types of matrices and their forms are used for solving numerous problems. Some of them are as follows:

A row matrix has only one row but any number of columns.

A diagonal matrix is a square matrix in which all off-diagonal elements are zero. For example, $$ A = \begin{bmatrix} a_{11} & 0 & 0 \\ 0 & a_{22} & 0 \\ 0 & 0 & a_{33} \end{bmatrix} $$ is a diagonal matrix.

Question 2: What is meant by matrices and what are its types?

Answer: A matrix is a rectangular array of numbers arranged in rows and columns. The numbers of rows and columns define the size or dimension of a matrix. The various types of matrices are row matrix, column matrix, null matrix, square matrix, diagonal matrix, upper triangular matrix, lower triangular matrix, symmetric matrix, and antisymmetric matrix.

Question 3: What is a scalar matrix?

Answer: A scalar matrix is a square matrix in which all off-diagonal elements are equal to zero and all diagonal elements are equal to each other. In other words, a scalar matrix is a multiple of the identity matrix.

Question 4: Can we say that a zero matrix is invertible?

Answer: No, a zero matrix is not invertible, because its determinant is zero.

Question 5: What is meant by a symmetric matrix?

Answer: A symmetric matrix is a square matrix that is equal to its transpose. Only square matrices can be symmetric, because equal matrices must have equal dimensions. The entries of a symmetric matrix are symmetric with respect to the main diagonal.

If your matrix is of a very small fixed size (at most 4x4), calling inverse() or determinant() directly on the matrix allows Eigen to avoid performing an LU decomposition and instead use formulas that are more efficient on such small matrices.
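The definitions of symmetric, diagonal, and scalar matrices given above can be checked mechanically. The following is a minimal sketch in plain C++ (standard library only), not taken from any particular library: the `Matrix` alias and the helper names `is_square`, `is_symmetric`, `is_diagonal`, `is_scalar`, `scale`, and `demo` are hypothetical names chosen for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical representation for this sketch: a matrix as a vector of rows.
using Matrix = std::vector<std::vector<double>>;

// True if every row has as many entries as there are rows.
bool is_square(const Matrix& m) {
    for (const auto& row : m) {
        if (row.size() != m.size()) return false;
    }
    return true;
}

// A symmetric matrix equals its transpose: m[i][j] == m[j][i].
bool is_symmetric(const Matrix& m) {
    if (!is_square(m)) return false;
    for (std::size_t i = 0; i < m.size(); ++i) {
        for (std::size_t j = i + 1; j < m.size(); ++j) {
            if (m[i][j] != m[j][i]) return false;
        }
    }
    return true;
}

// A diagonal matrix is square with all off-diagonal entries equal to zero.
bool is_diagonal(const Matrix& m) {
    if (!is_square(m)) return false;
    for (std::size_t i = 0; i < m.size(); ++i) {
        for (std::size_t j = 0; j < m.size(); ++j) {
            if (i != j && m[i][j] != 0.0) return false;
        }
    }
    return true;
}

// A scalar matrix is diagonal with all diagonal entries equal,
// i.e. a multiple of the identity matrix.
bool is_scalar(const Matrix& m) {
    if (!is_diagonal(m)) return false;
    for (std::size_t i = 1; i < m.size(); ++i) {
        if (m[i][i] != m[0][0]) return false;
    }
    return true;
}

// Multiply every element of m by the scalar k.
Matrix scale(const Matrix& m, double k) {
    Matrix result = m;
    for (auto& row : result) {
        for (auto& value : row) value *= k;
    }
    return result;
}

// Small self-check exercising the helpers above.
void demo() {
    const Matrix identity = {{1.0, 0.0}, {0.0, 1.0}};
    const Matrix scalar = scale(identity, 3.0);   // 3 * I
    assert(is_scalar(scalar));
    assert(is_symmetric(scalar));                 // every scalar matrix is symmetric

    const Matrix row = {{1.0, 2.0, 3.0}};         // a 1 x 3 row matrix
    assert(!is_symmetric(row));                   // not square, so not symmetric
}
```

Exact floating-point comparison is used here only because the sketch works with small exactly representable values; for computed data a tolerance would be the safer assumption.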

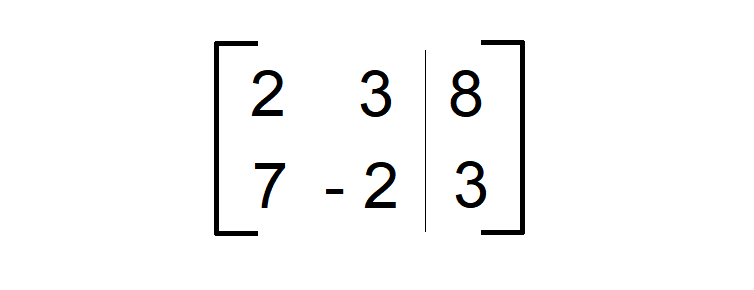

The dimension of a matrix A, written dim(A), is how many rows and columns it has: dim(A) = m x n represents a matrix with m rows and n columns. To multiply a matrix by a scalar, multiply all of the elements by the scalar.

Before computing an inverse or a determinant, first of all make sure that you really want this. While inverse and determinant are fundamental mathematical concepts, in numerical linear algebra they are not as useful as in pure mathematics. Inverse computations are often advantageously replaced by solve() operations, and the determinant is often not a good way of checking if a matrix is invertible. However, for very small matrices, the above may not be true, and inverse and determinant can be very useful. While certain decompositions, such as PartialPivLU and FullPivLU, offer inverse() and determinant() methods, you can also call inverse() and determinant() directly on a matrix.

For solving linear systems, ColPivHouseholderQR is a QR decomposition with column pivoting. It's a good compromise for this tutorial, as it works for all matrices while being quite fast. Eigen provides other decompositions that you can choose from, depending on your matrix, the problem you are trying to solve, and the trade-off you want to make. All of these decompositions offer a solve() method. To get an overview of the true relative speed of the different decompositions, check the benchmark in the Eigen documentation.

If you know more about the properties of your matrix, you can select a better-suited method. For example, a good choice for solving linear systems with a non-symmetric matrix of full rank is PartialPivLU. If you know that your matrix is also symmetric and positive definite, a very good choice is the LLT or LDLT decomposition. The right-hand side passed to solve() does not have to be a vector; a general matrix is also possible.
